Self-Driving Cars and Localization

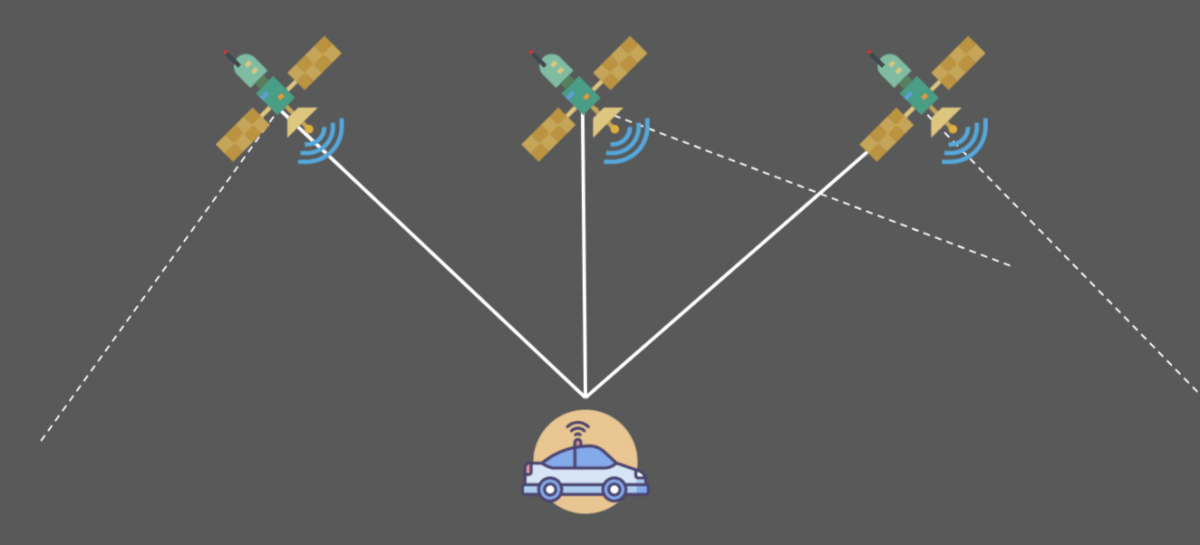

In a self-driving car, the GPS (Global Positioning System) uses trilateration to estimate our position.

These measurements can be off by 1 to 10 meters. An error of this size is too large and can be fatal for the passengers or for the surroundings of the autonomous vehicle. We therefore add a step called localization, which refines this estimate.
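To give an intuition for trilateration, here is a minimal 2D sketch in Python. It is a simplification: a real GPS receiver works in 3D and also has to solve for its clock error. The function name `trilaterate_2d` and the anchor coordinates are hypothetical, chosen only for illustration.

```python
import math

def trilaterate_2d(anchors, distances):
    """Find (x, y) from three known 2D points and the measured distance to each.

    Subtracting the first circle equation from the other two removes the
    squared unknowns and leaves a 2x2 linear system.
    """
    (x1, y1), (x2, y2), (x3, y3) = anchors
    r1, r2, r3 = distances
    a11, a12 = 2.0 * (x2 - x1), 2.0 * (y2 - y1)
    a21, a22 = 2.0 * (x3 - x1), 2.0 * (y3 - y1)
    b1 = (x2**2 - x1**2) + (y2**2 - y1**2) - (r2**2 - r1**2)
    b2 = (x3**2 - x1**2) + (y3**2 - y1**2) - (r3**2 - r1**2)
    det = a11 * a22 - a12 * a21
    if abs(det) < 1e-12:
        raise ValueError("anchors are collinear, the position is ambiguous")
    # Cramer's rule for the 2x2 system.
    x = (b1 * a22 - a12 * b2) / det
    y = (a11 * b2 - a21 * b1) / det
    return x, y

# Hypothetical anchors and exact distances to the point (3, 4).
anchors = [(0.0, 0.0), (10.0, 0.0), (0.0, 10.0)]
distances = [math.hypot(3.0 - ax, 4.0 - ay) for ax, ay in anchors]
position = trilaterate_2d(anchors, distances)
```

With noisy distances, the recovered position shifts accordingly, which is where the meter-level error comes from.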

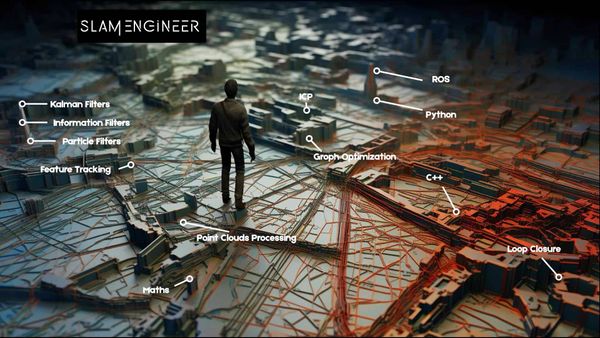


How to locate precisely?

There are many different techniques to help an autonomous vehicle locate itself.

- Odometry — This first technique uses a starting position and a calculation of wheel displacement to estimate the position at time t. It is generally quite inaccurate, because measurement inaccuracies, wheel slip, and similar effects accumulate over time.

- Kalman filter — The previous article covered this technique for estimating the state of the vehicles around us. We can also use it to estimate the state of our own vehicle.

- Particle Filter — Particle filters are another variant of Bayesian filters. This technique compares the observations from our sensors with the map of the environment. We then concentrate particles in the areas where the observations best match the map.

- SLAM — A very popular technique when we also need to estimate the map is SLAM (Simultaneous Localization And Mapping). In this technique, we estimate our own position as well as the positions of landmarks. A traffic light can be a landmark.
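To see why odometry alone drifts, here is a small dead-reckoning sketch in Python. The differential-drive model, the function names, and the exaggerated 2% wheel error are assumptions made for illustration, not values from a real vehicle.

```python
import math

def dead_reckon(pose, d_left, d_right, track_width):
    """Advance (x, y, theta) from the distance travelled by each wheel."""
    x, y, theta = pose
    d_center = (d_left + d_right) / 2.0          # distance travelled by the vehicle center
    d_theta = (d_right - d_left) / track_width   # heading change from the wheel difference
    # Integrate along the average heading over the step.
    x += d_center * math.cos(theta + d_theta / 2.0)
    y += d_center * math.sin(theta + d_theta / 2.0)
    return (x, y, theta + d_theta)

def drive_straight(steps, step_m, scale_error_right=0.0, track_width=1.5):
    """Integrate odometry while the right wheel over-reports by a small factor."""
    pose = (0.0, 0.0, 0.0)
    for _ in range(steps):
        pose = dead_reckon(pose, step_m, step_m * (1.0 + scale_error_right), track_width)
    return pose

ideal = drive_straight(steps=1000, step_m=0.1)
drifted = drive_straight(steps=1000, step_m=0.1, scale_error_right=0.02)
drift_m = math.hypot(drifted[0] - ideal[0], drifted[1] - ideal[1])
```

The vehicle really drives 100 m in a straight line, but the small wheel error bends the estimated path into an arc, and the gap between the two keeps growing with distance.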

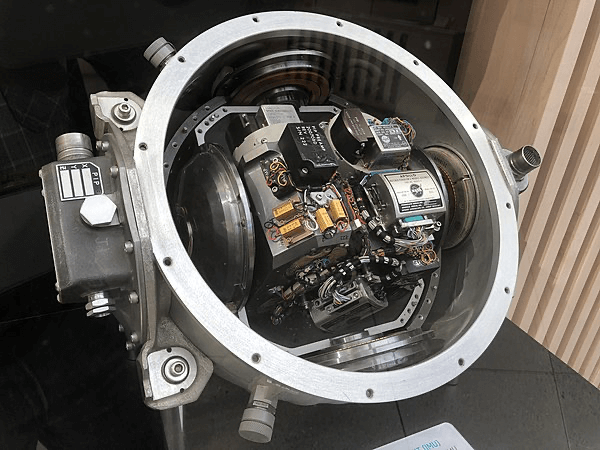

Sensors

- Inertial Measurement Unit (IMU) is a sensor that measures the movement of the vehicle around the yaw, pitch, and roll axes.

This sensor measures acceleration along the X, Y, and Z axes and, depending on the unit, also reports orientation, inclination, and altitude.

- Global Positioning System (GPS), also known as NAVSTAR, is the US positioning system. Europe has Galileo and Russia has GLONASS. Global Navigation Satellite System (GNSS) is the generic term for all of them; a GNSS receiver can combine several of these constellations to increase accuracy.

Vocabulary

We will use several terms in this article:

- Observation — An observation can be a measurement, an image, an angle …

- Control — These are our movements, including our speeds and the yaw, pitch, and roll values retrieved from the IMU.

- The position of the vehicle — This vector includes the (x, y) coordinates and the orientation θ.

- The map — This contains our landmarks, roads, and so on. There are several types of maps; companies like Here Technologies produce HD Maps, which are accurate to the centimeter. These maps are produced for the environments where the autonomous car will be allowed to drive.

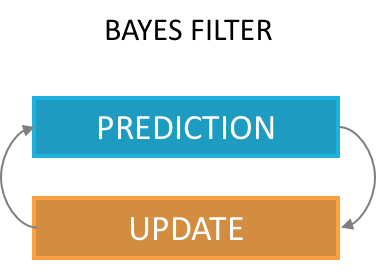

Kalman Filters

As explained in the previous article, a Kalman filter can estimate the state of a vehicle. As a reminder, it is an implementation of the Bayes Filter, with a prediction phase and an update phase.
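As a quick reminder of the two phases, here is a minimal one-dimensional sketch in Python. The function names `kf_predict` and `kf_update`, and all the numbers, are illustrative assumptions; a real vehicle state has several dimensions and uses matrices.

```python
def kf_predict(mean, var, motion, motion_var):
    """Prediction: shift the estimate by the motion and grow the uncertainty."""
    return mean + motion, var + motion_var

def kf_update(mean, var, measurement, measurement_var):
    """Update: blend prediction and measurement according to their variances."""
    gain = var / (var + measurement_var)            # Kalman gain in 1D
    new_mean = mean + gain * (measurement - mean)
    new_var = (1.0 - gain) * var
    return new_mean, new_var

mean, var = 0.0, 100.0                              # vague initial belief
for motion, z in [(1.0, 1.2), (1.0, 1.9), (1.0, 3.1)]:
    mean, var = kf_predict(mean, var, motion, motion_var=0.5)
    mean, var = kf_update(mean, var, z, measurement_var=1.0)
```

After three cycles the variance has dropped from 100 to well below 1: each prediction adds uncertainty, and each update removes some.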

Particle Filters

A Particle Filter is another implementation of the Bayes Filter.

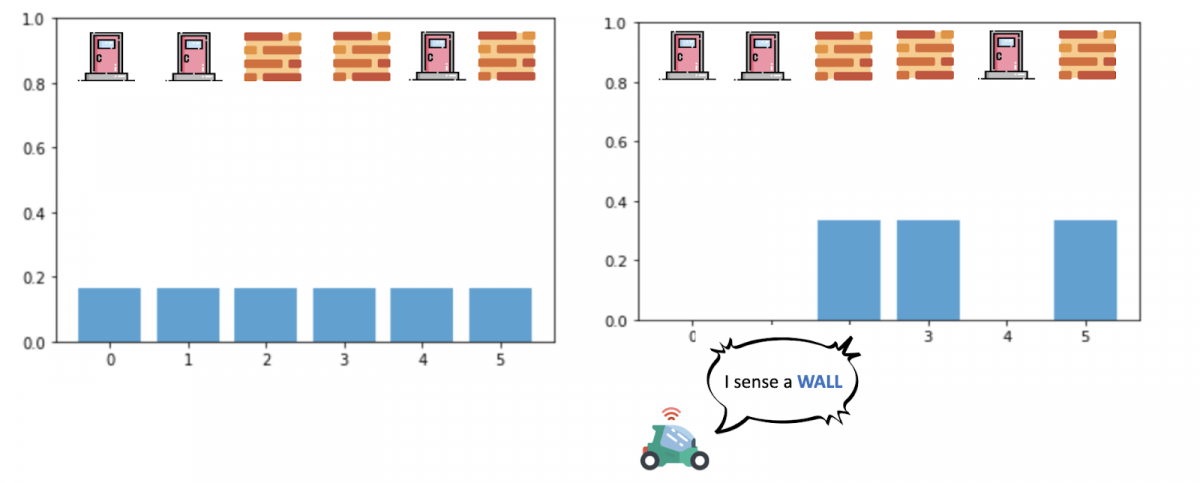

In a Particle Filter, we create particles throughout the area defined by the GPS and we assign a weight to each particle.

The weight of a particle represents the probability that our vehicle is at the location of the particle.

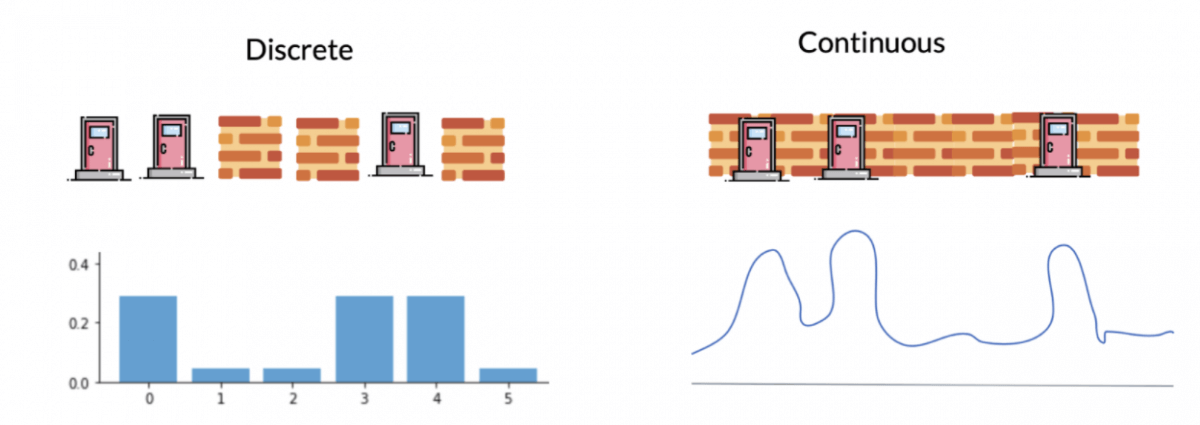

Unlike in the Kalman filter, our probabilities are not continuous values but discrete ones; we call them weights.

Algorithm

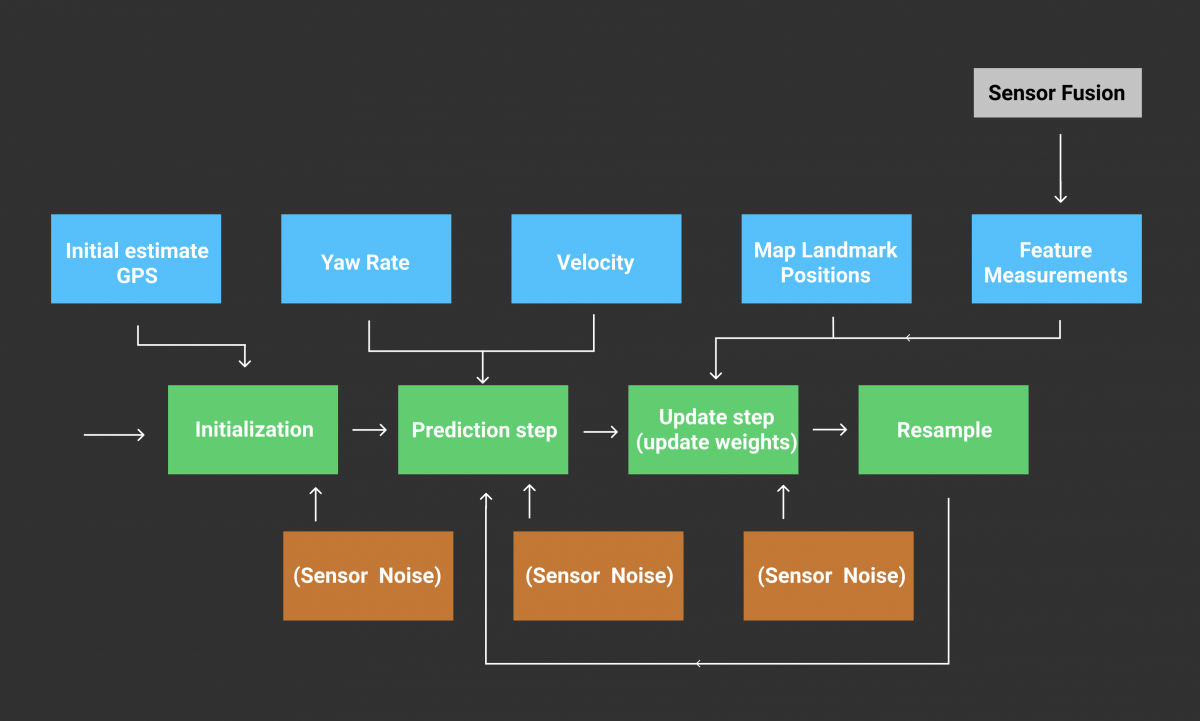

The algorithm follows the scheme above. We distinguish four stages (Initialization, Prediction, Update, Resampling), which rely on several inputs (GPS, IMU, speeds, measurements of the landmarks).

- Initialization — We use an initial estimate from the GPS and add noise (due to sensor inaccuracy) to initialize a chosen number of particles. Each particle has a position (x, y) and an orientation θ .

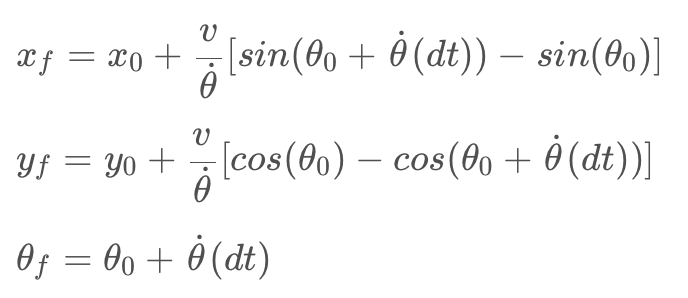
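The initialization step can be sketched as follows in Python. The function name `init_particles`, the noise values, and the choice of 1/N as the initial weight are assumptions for illustration.

```python
import random

def init_particles(init_x, init_y, init_theta, std, num_particles=100, seed=None):
    """Draw particles around an initial estimate using Gaussian noise.

    std is (std_x, std_y, std_theta), standing in for the sensor uncertainty.
    Returns a list of (x, y, theta) tuples and a list of equal weights.
    """
    rng = random.Random(seed)
    std_x, std_y, std_theta = std
    particles = [
        (
            rng.gauss(init_x, std_x),
            rng.gauss(init_y, std_y),
            rng.gauss(init_theta, std_theta),
        )
        for _ in range(num_particles)
    ]
    # Every particle starts equally likely (assumed here to be 1/N).
    weights = [1.0 / num_particles] * num_particles
    return particles, weights

particles, weights = init_particles(10.0, 20.0, 0.5, std=(2.0, 2.0, 0.05), seed=42)
```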

This gives us a particle distribution throughout the GPS area with equal weights.

- Prediction — Once our particles are initialized, we make a first prediction that takes our speed and our rotations into account. Every subsequent prediction also takes our movements into account. We use equations for x, y, and θ (orientation) to describe the motion of the vehicle.

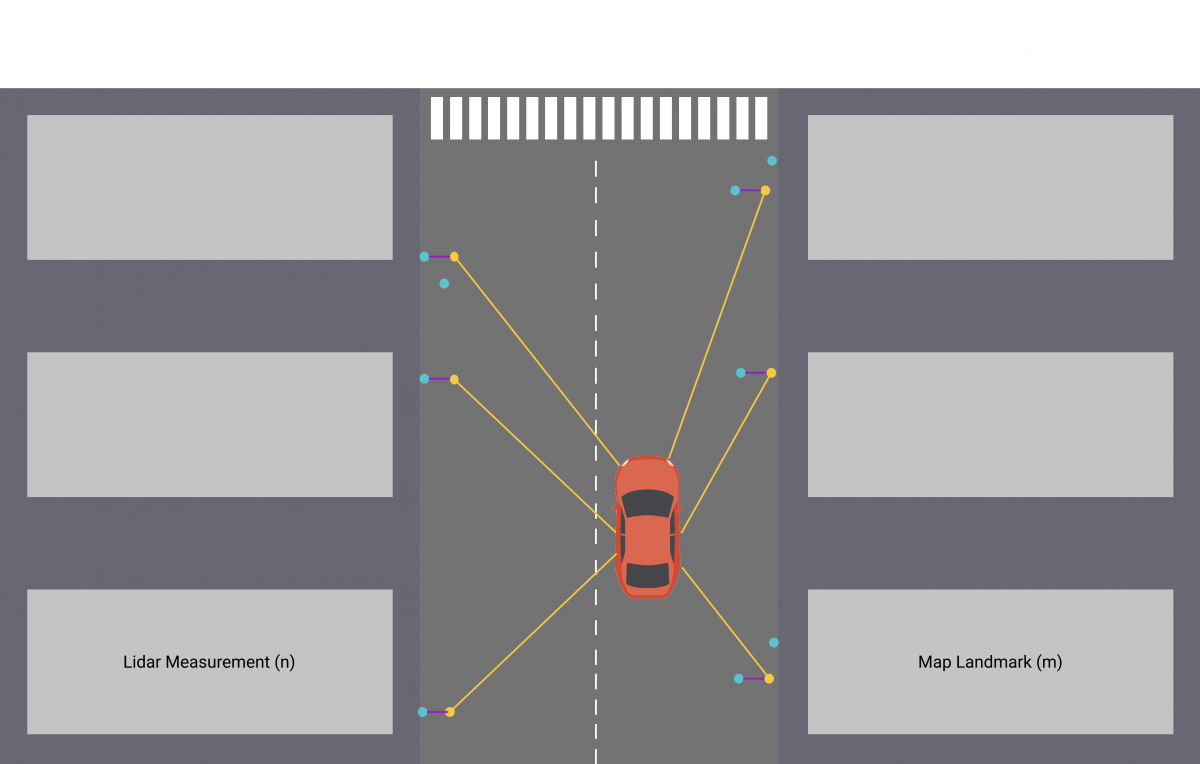
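A minimal sketch of the prediction step in Python, assuming a motion model driven by a constant velocity and yaw rate over a time step `dt`. The function name `predict` and this particular model are assumptions; the equations in the image may be written differently.

```python
import math

def predict(particle, velocity, yaw_rate, dt):
    """Move one (x, y, theta) particle according to velocity and yaw rate."""
    x, y, theta = particle
    if abs(yaw_rate) < 1e-6:
        # Driving straight: avoid dividing by a yaw rate close to zero.
        x += velocity * dt * math.cos(theta)
        y += velocity * dt * math.sin(theta)
    else:
        new_theta = theta + yaw_rate * dt
        x += velocity / yaw_rate * (math.sin(new_theta) - math.sin(theta))
        y += velocity / yaw_rate * (math.cos(theta) - math.cos(new_theta))
        theta = new_theta
    return (x, y, theta)

straight = predict((0.0, 0.0, 0.0), velocity=10.0, yaw_rate=0.0, dt=1.0)
turning = predict((0.0, 0.0, 0.0), velocity=1.0, yaw_rate=math.pi / 2.0, dt=1.0)
```

In a full filter, we would typically also add a little Gaussian noise to each particle after this step, so that the particles keep covering the uncertainty of the motion.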

- Update — In our update phase, we first match our measurements n to the landmarks of the map m.

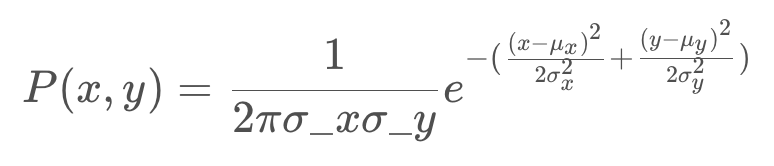
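One common way to do this matching is nearest-neighbor association, sketched here in Python. This is an assumption about the matching method, and the function names `to_map_frame` and `associate` are hypothetical. Observations are expressed in the vehicle frame, so we first transform them into the map frame using the pose of the particle.

```python
import math

def to_map_frame(particle, obs):
    """Rotate and translate a vehicle-frame observation into map coordinates."""
    px, py, theta = particle
    ox, oy = obs
    mx = px + math.cos(theta) * ox - math.sin(theta) * oy
    my = py + math.sin(theta) * ox + math.cos(theta) * oy
    return (mx, my)

def associate(particle, observations, landmarks):
    """Pair each observation (in map frame) with its closest landmark."""
    pairs = []
    for obs in observations:
        m_obs = to_map_frame(particle, obs)
        nearest = min(
            landmarks,
            key=lambda lm: math.hypot(lm[0] - m_obs[0], lm[1] - m_obs[1]),
        )
        pairs.append((m_obs, nearest))
    return pairs

# Hypothetical particle pose, observation, and landmark coordinates.
landmarks = [(5.0, 3.0), (2.0, 1.0), (6.0, 1.0)]
pairs = associate((4.0, 5.0, -math.pi / 2.0), [(2.0, 2.0)], landmarks)
```

Here the observation (2, 2) seen from the particle at (4, 5) lands at about (6, 3) on the map, and the closest landmark is the one at (5, 3).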

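We use sensor fusion data to determine surrounding objects and then update our weights with the following equation:

$$P(x, y) = \frac{1}{2\pi \sigma_x \sigma_y} \exp\left(-\left(\frac{(x - \mu_x)^2}{2\sigma_x^2} + \frac{(y - \mu_y)^2}{2\sigma_y^2}\right)\right)$$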

In this equation, for each particle:

- σx and σy are our uncertainties

- x and y are the observations of the landmarks

- μx and μy are the ground truth coordinates of the landmarks coming from the map.

When the error is large, the exponential term tends to 0, and so does the weight of our particle. When the error is very small, the exponential term tends to 1, and the weight of the particle approaches 1 / (2π·σx·σy).
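The weight computation can be sketched in Python as follows, using the symbols defined above (x, y, μx, μy, σx, σy). The function names are hypothetical, and multiplying the terms of all matched observations to get the final weight of a particle is a common choice that we assume here.

```python
import math

def gaussian_weight(obs, landmark, sigma):
    """Gaussian likelihood of one observation given its matched landmark."""
    x, y = obs
    mu_x, mu_y = landmark
    sigma_x, sigma_y = sigma
    norm = 1.0 / (2.0 * math.pi * sigma_x * sigma_y)
    exponent = ((x - mu_x) ** 2) / (2.0 * sigma_x ** 2) \
             + ((y - mu_y) ** 2) / (2.0 * sigma_y ** 2)
    return norm * math.exp(-exponent)

def particle_weight(pairs, sigma):
    """Combine all (observation, landmark) pairs of one particle (assumed: product)."""
    weight = 1.0
    for obs, landmark in pairs:
        weight *= gaussian_weight(obs, landmark, sigma)
    return weight

sigma = (0.3, 0.3)
close = gaussian_weight((6.0, 3.0), (5.0, 3.0), sigma)    # 1 m error
exact = gaussian_weight((5.0, 3.0), (5.0, 3.0), sigma)    # no error
far = gaussian_weight((50.0, 3.0), (5.0, 3.0), sigma)     # huge error
```

With these values, `exact` equals 1 / (2π·σx·σy), `close` is already much smaller, and `far` is indistinguishable from 0.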

- Resampling — Finally, in the last stage, we keep the particles with the highest weights and discard the least likely ones.

The higher the weight, the more likely the particle is to survive.
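A minimal resampling sketch in Python, drawing particles with replacement and with a probability proportional to their weight. The function name `resample` is hypothetical, and using the standard library's `random.choices` is just one convenient way to do it (a resampling wheel is another).

```python
import random

def resample(particles, weights, rng=None):
    """Draw a new particle set of the same size, proportionally to the weights."""
    rng = rng or random.Random()
    if sum(weights) <= 0.0:
        raise ValueError("all weights are zero, nothing to resample from")
    # Particles with a high weight are likely to be drawn several times;
    # particles with a low weight tend to disappear.
    return rng.choices(particles, weights=weights, k=len(particles))

particles = [(0.0, 0.0, 0.0), (5.0, 5.0, 0.1), (9.0, 9.0, 0.2)]
weights = [0.0, 0.0, 1.0]
survivors = resample(particles, weights, rng=random.Random(0))
```

In this extreme case only the third particle has a non-zero weight, so all survivors are copies of it. The next prediction step then spreads these copies out again.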

The cycle then repeats with the most probable particles: we take into account our displacement since the last computation, make a prediction, and then correct it according to our observations.

Particle filters are effective and can locate a vehicle very precisely. For each particle, we compare the measurements predicted from the particle's pose with the measurements made by the vehicle, and compute a probability, or weight. This calculation can make the filter slow if we have a lot of particles. It also requires always having a map of the environment where we drive.

Results

My projects with Udacity taught me how to implement a Particle Filter in C++. As in the algorithm described earlier, we implement localization by defining 100 particles and assigning a weight to each particle through measurements made by our sensors.

In the following video, we can see:

- A green laser representing the measurements from the vehicle.

- A blue laser representing the measurements from the nearest particle (blue circle).

- A particle locating the vehicle (blue circle).

- The black circles are our landmarks (traffic lights, signs, bushes, …) coming from the map.

SLAM (Simultaneous Localization And Mapping)

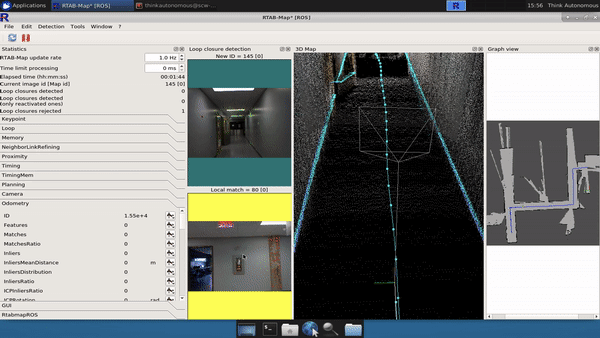

Another very popular method is SLAM. This technique makes it possible to estimate the map (the coordinates of the landmarks) in addition to the coordinates of our vehicle.

To do so, we can use the Lidar to find walls and sidewalks and thus build a map. SLAM algorithms need to recognize landmarks, then position them and add them to the map.

A SLAM algorithm is based on either a Kalman Filter, a Particle Filter, or an Information Filter (the inverse-covariance form of the Kalman Filter). If you’d like to learn more about this, I invite you to take my course THE GRAND SLAM: Hit Your Robotics Goals with Simultaneous Localization And Mapping.

Conclusion

Localization is an essential topic for any robot or autonomous vehicle. If we can locate our vehicle very precisely, we can drive independently. This subject is constantly evolving: sensors are becoming more and more accurate, and algorithms more and more efficient. SLAM techniques are very popular for outdoor and indoor navigation where GPS is not very effective. Cartography also plays a very important role because, without a map, we cannot know where we are. Today, research is exploring localization using deep learning algorithms and cameras.

Now that we are localized and know our environment, we can discuss algorithms for creating trajectories and making decisions!


Next, let's learn about Planning for self-driving cars!